It was meant to replace those waterfalls. It was meant to increase revenue. It was meant to spark innovation. It was meant to be a solution!

Long story short: it isn’t, and it never will be!

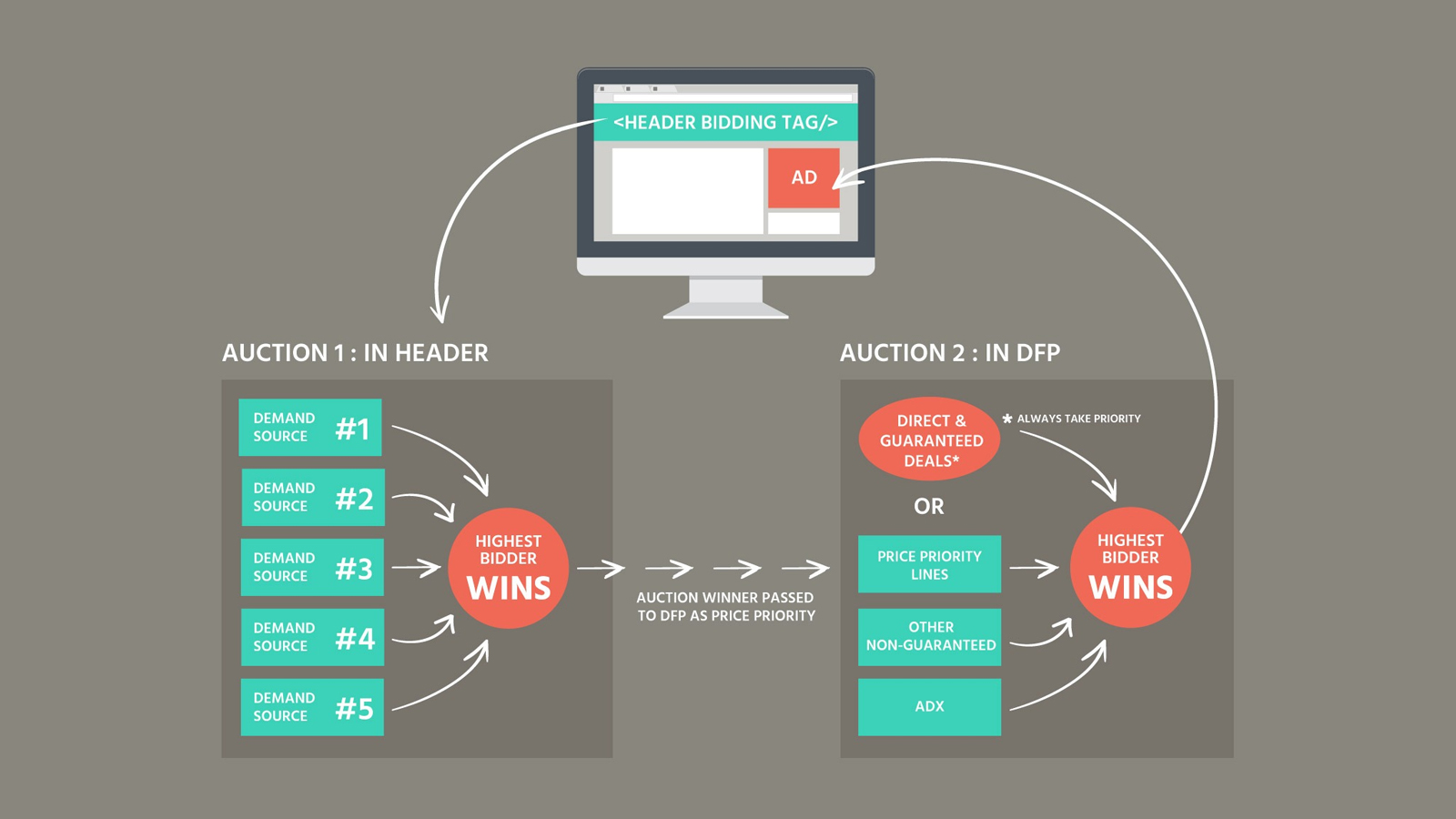

Born out of a hack, header bidding spread like wildfire. In the mad world of ad tech, no technology had ever seen such widespread adoption and manipulation. Once AppNexus and others gave header bidding a shape and turned it into a technology to counter the duopoly of Google and Facebook, publishers made a beeline for it. The hunger for more revenue prompted publishers to adopt header bidding overnight and check their reports afterwards. In the early days, it was magical. Publishers no longer had to maintain waterfalls, which relied on historical trends rather than real-time data. The boost in revenue was remarkable, and SSPs were happy as their demand increased while Google’s took a hit.

Soon many other SSPs introduced their own adapters and started rapid business development around this newfound gem in the old-fashioned, tag-based ad tech industry. Ad networks ditched the traditional model and developed their own header bidding solutions for publishers who couldn’t implement header bidding themselves. While all this was going on at breakneck speed, one small thing was ignored. This one factor will, by itself, ensure the decline of client-side header bidding.

“The Page Load Time”

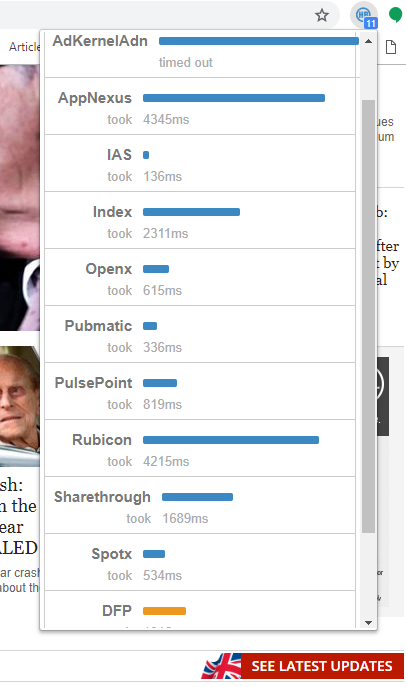

When the prebid.js script loads, it holds back the ad units on the page from loading an ad until it has fetched bids from the demand partners. Until that is done, the script holds the page to ransom while the async ad units wait for creatives. As the combined number of ad requests from publishers around the world increases, the already overloaded demand partners slow down and respond to ad requests more slowly than they used to. This delay adds to the load time of the page and thus lowers the website’s ranking. It also makes the page jerk when the ads finally appear, because the user, already reading the content, had not seen the ad until then. This ruins the user experience.
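The waiting described above can be modelled roughly as follows. This is only a sketch: it assumes the bidders are called in parallel and that a timeout caps the wait, and the function name, bidder names and response times are all hypothetical, not measurements.

```python
from typing import Dict, List, Tuple


def client_side_wait(latencies_ms: Dict[str, int], timeout_ms: int) -> Tuple[int, List[str]]:
    """Return (wait before the ad server call, bidders that missed the timeout).

    Bidders are assumed to be called in parallel, so the wait is set by the
    slowest bidder, capped by the timeout.
    """
    if not latencies_ms:
        return 0, []
    wait_ms = min(max(latencies_ms.values()), timeout_ms)
    timed_out = sorted(name for name, ms in latencies_ms.items() if ms > timeout_ms)
    return wait_ms, timed_out


# Hypothetical response times in milliseconds, for illustration only.
normal_day = {"bidder_a": 180, "bidder_b": 420, "bidder_c": 650}
overloaded = {"bidder_a": 225, "bidder_b": 525, "bidder_c": 1400}

print(client_side_wait(normal_day, timeout_ms=1000))  # (650, [])
print(client_side_wait(overloaded, timeout_ms=1000))  # (1000, ['bidder_c'])
```

In this model a single slow demand partner is enough to push the wait up to the full timeout, and its bid is lost anyway.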

While this caveat of the HB revolution was already known to publishers, they argued that the cost-benefit ratio was in their favour. What they didn’t realize is that when everybody switches to HB, the SSPs get overloaded and slow down. According to one study, the average response time from SSPs has increased by 25% in the last 2 years. To cope with the huge number of ad requests, SSPs have hiked their fees at both ends, to pay for the infrastructure they had to put in place to meet this demand.

This increasing load time hurts users, who face slow websites. We have seen some websites use as many as 17 bidders, and they take forever to load. At a time when the web is moving towards AMP pages and PWAs, header bidding is not a desirable solution and is indeed an obstruction to its growth. It slows down the web, and the cost-benefit ratio no longer favours publishers as tech fees from SSPs increase.

The Solution?

Let’s take this server side. When you run a server-side auction, you have control over the infrastructure. You can optimize the auction and add infrastructure to support it. You can reduce the load on the client side, improving UX and decreasing page load time. When that happens, you see overall growth and not just growth in revenue. Who’s doing it?

Google’s Exchange Bidding is one such solution. Google’s vision is a faster internet and easy access to information. With this vision in mind, Google never supported client-side header bidding and instead launched its own S2S (server-to-server) auction. Exchange Bidding runs the auction on the server, reducing client-side load time and increasing revenue. Another class of header bidding solutions is also emerging: server-side header bidding. OpenX has one such solution, OpenX Meta, which runs the auction on the server.
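To make the idea concrete, here is a minimal sketch of an auction run on the server. It is not how Exchange Bidding or OpenX Meta actually work internally; it simply assumes a highest-bid-wins auction with a server-side timeout and an optional price floor. The `Bid` class, `run_server_auction` and all the numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Bid:
    bidder: str
    cpm: float       # price per thousand impressions
    latency_ms: int  # time the bidder took to answer the server


def run_server_auction(bids: List[Bid], timeout_ms: int, floor_cpm: float = 0.0) -> Optional[Bid]:
    """Pick the highest bid that arrived in time and clears the floor."""
    eligible = [b for b in bids if b.latency_ms <= timeout_ms and b.cpm >= floor_cpm]
    return max(eligible, key=lambda b: b.cpm, default=None)


# Hypothetical bids, for illustration only.
bids = [
    Bid("ssp_a", 1.20, 90),
    Bid("ssp_b", 2.10, 140),
    Bid("ssp_c", 3.00, 400),  # highest price, but too slow for the timeout
]

winner = run_server_auction(bids, timeout_ms=200)
print(winner.bidder if winner else "no winner")  # ssp_b
```

The page makes one request and gets one answer back; the fan-out to bidders, the timeout and any tuning all happen on infrastructure the auction operator controls.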

With more solutions like these coming up and adoption increasing, client-side header bidding will soon perish.
